GeoWerkstatt Project of the Month, October 2022

Project: High-precision pose estimation of a UAV by integrating camera and laser scanner data with building models

Researcher: Mehrnoush Mohammadi

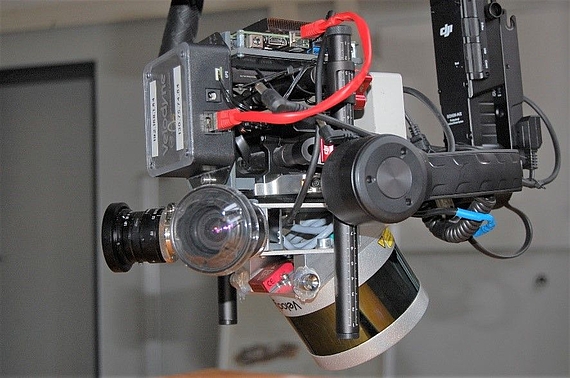

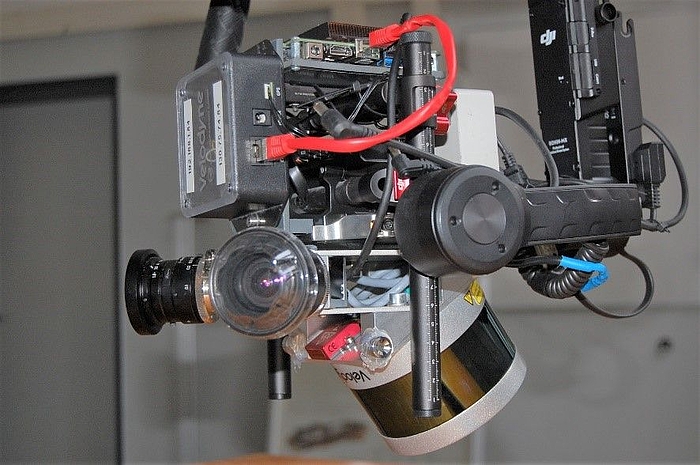

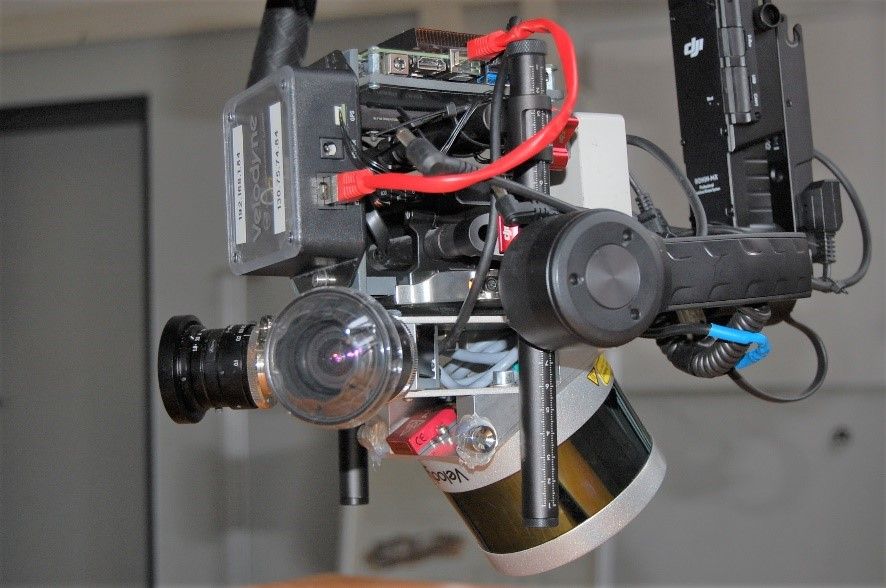

Project idea: Existing building models can be used to stabilize the orientation of UAVs that carry cameras and laser scanners on board.

Unmanned aerial vehicles (UAVs) are used more and more every day because of their flexibility in surveying tasks such as land inspection, monitoring and 3D reconstruction. In most scenarios, a camera is the primary onboard sensor because it is the more economical choice. However, the need for higher accuracy, especially in urban areas, is making methods that combine data from multiple sensors increasingly popular. The aim of this project is to determine the UAV's flight path (trajectory) with centimeter accuracy and at high frequency by integrating the measurement data from the onboard camera and laser scanner. This integration has two prerequisites. First, the sensors must be time-synchronized, i.e. time stamps must be known for the data gathered by each sensor. Second, the shift and rotation of each sensor with respect to the others must be known; in other words, the system has to be extrinsically calibrated.
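
To illustrate what the shift and rotation between two sensors are used for, the following minimal sketch moves a single laser point from the scanner frame into the camera frame. The function names, the rotation about a single axis and the numeric values are assumptions made for this example; they are not the project's calibration or code.

```python
import math

def rotation_z(angle_rad):
    """3x3 rotation matrix for a rotation about the z-axis (illustrative only)."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return [[c, -s, 0.0],
            [s, c, 0.0],
            [0.0, 0.0, 1.0]]

def scanner_to_camera(point, rotation, shift):
    """Apply the rigid transform p_cam = R * p_scan + t to one 3D point."""
    return tuple(
        sum(rotation[i][j] * point[j] for j in range(3)) + shift[i]
        for i in range(3)
    )

# Hypothetical calibration: scanner rotated by 90 degrees about z
# and mounted 10 cm along z relative to the camera.
R = rotation_z(math.pi / 2)
t = (0.0, 0.0, 0.1)
print(scanner_to_camera((1.0, 0.0, 0.0), R, t))
```

Once both sensors share a common time base and this transform is known, a laser point and an image taken at the same instant can be expressed in the same coordinate frame.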

Our algorithm is a hybrid adjustment that integrates laser scanner measurements into the bundle block adjustment, a well-known concept in photogrammetry and computer vision. In its classical form, bundle adjustment works with images only and simultaneously optimizes the 3D object coordinates, the internal calibration parameters of the camera and the pose of the sensor. It relies on points that are visible in several images showing the same object or scene from different positions and possibly different viewing directions. These points, called tie points, are used to tie the images together, and their 3D coordinates are determined in the process.
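
The quantity that a bundle adjustment typically minimizes is the reprojection error of each tie point: the difference between where the point was observed in an image and where the current estimates of pose, calibration and 3D coordinates predict it. The sketch below computes this residual for one observation, assuming a simple pinhole camera without lens distortion. All names and values are hypothetical and serve only to illustrate the principle.

```python
def project(point_world, rotation, camera_center, focal, principal_point):
    """Project a 3D world point into the image of a pinhole camera.

    rotation: 3x3 matrix (world -> camera), camera_center: position in world
    coordinates, focal: focal length in pixels, principal_point: (cx, cy).
    """
    d = [point_world[i] - camera_center[i] for i in range(3)]
    x, y, z = (sum(rotation[i][j] * d[j] for j in range(3)) for i in range(3))
    if z <= 0:
        raise ValueError("point is not in front of the camera")
    return (focal * x / z + principal_point[0],
            focal * y / z + principal_point[1])

def reprojection_residual(observed, point_world, rotation, camera_center,
                          focal, principal_point):
    """Observed minus predicted image coordinates for one tie point."""
    u, v = project(point_world, rotation, camera_center, focal, principal_point)
    return (observed[0] - u, observed[1] - v)

identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
print(reprojection_residual((601.0, 599.0), (1.0, 2.0, 10.0),
                            identity, (0.0, 0.0, 0.0), 1000.0, (500.0, 400.0)))
```

An adjustment would stack such residuals over all tie points and images and vary the unknowns until their weighted sum of squares is minimal.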

© IPI

Laser scanner data consist of thousands of individual points and are therefore called point clouds. To add the laser points to our model, we detect planes in the laser point cloud and assign to each plane the tie points whose 3D coordinates, estimated from their image coordinates, correspond to it. These tie points are then constrained to lie on the plane defined by the laser points.
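
The condition that a tie point lies on a laser plane can be written as a point-to-plane distance that should be zero. The following sketch shows this under a simplifying assumption: the plane is defined by just three laser points, whereas a real plane detection would fit a plane to many points. Function names and coordinates are hypothetical.

```python
import math

def plane_from_points(a, b, c):
    """Return (unit normal n, offset d) of the plane n.x + d = 0 through a, b, c."""
    u = [b[i] - a[i] for i in range(3)]
    v = [c[i] - a[i] for i in range(3)]
    # The cross product of two in-plane vectors gives the plane normal.
    n = [u[1] * v[2] - u[2] * v[1],
         u[2] * v[0] - u[0] * v[2],
         u[0] * v[1] - u[1] * v[0]]
    norm = math.sqrt(sum(k * k for k in n))
    if norm == 0.0:
        raise ValueError("the three points are collinear")
    n = [k / norm for k in n]
    d = -sum(n[i] * a[i] for i in range(3))
    return n, d

def point_to_plane_distance(point, normal, offset):
    """Signed distance of a 3D point from the plane; zero if it lies on it."""
    return sum(normal[i] * point[i] for i in range(3)) + offset

# Three laser points on a horizontal surface at height 2 m (made-up values).
normal, offset = plane_from_points((0.0, 0.0, 2.0), (1.0, 0.0, 2.0), (0.0, 1.0, 2.0))
# A tie point estimated from images, 3 cm above that surface.
print(point_to_plane_distance((5.0, 5.0, 2.03), normal, offset))
```

In an adjustment, this distance would act as an additional residual that pulls the tie point onto the plane while the image observations are fitted at the same time.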

To find the trajectory, we define support points at (almost) regular time intervals along it. By interpolating between these support points, we can then determine the trajectory at any instant in time. The novelty of this work lies in the mathematical model that describes the fusion of laser scanner and camera data.
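
As a rough illustration of the support-point idea, the sketch below interpolates the UAV position at an arbitrary time stamp. The interpolation scheme used in the project is not stated here, so linear interpolation is an assumption chosen for simplicity, and only the position is handled; orientation would need a rotation-aware scheme. The function name and sample values are hypothetical.

```python
from bisect import bisect_right

def interpolate_position(times, positions, t):
    """Linearly interpolate a 3D position at time t between support points.

    times: sorted time stamps of the support points (at least two),
    positions: one (x, y, z) tuple per time stamp.
    """
    if not times[0] <= t <= times[-1]:
        raise ValueError("t lies outside the time span of the support points")
    # Index of the support point that closes the interval containing t.
    k = min(bisect_right(times, t), len(times) - 1)
    t0, t1 = times[k - 1], times[k]
    w = (t - t0) / (t1 - t0)
    return tuple((1.0 - w) * p0 + w * p1
                 for p0, p1 in zip(positions[k - 1], positions[k]))

support_times = [0.0, 1.0, 2.0]
support_positions = [(0.0, 0.0, 10.0), (2.0, 0.0, 10.0), (2.0, 4.0, 12.0)]
print(interpolate_position(support_times, support_positions, 1.5))
```

With this kind of parameterization, only the support points are unknowns in the adjustment, while every camera exposure and laser measurement obtains its pose from its own time stamp.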

To compute world coordinates for the trajectory, ground control points (GCPs) are normally used, i.e. object points in the scene with known image and world coordinates. However, setting them up and measuring their accurate positions in every new area costs time and effort. Using satellite signals (a GNSS/IMU solution) to determine the position and orientation of the UAV during flight is usually not possible in an urban environment: when the UAV flies through a densely built-up area, the satellite signals either do not reach the antenna or are not accurate enough owing to the restrictions on the flight. For this reason, we use building models as the reference in our urban scenarios. These models are freely available in level of detail 2 (LOD2) from the State Office for Geoinformation and Land Surveying of Lower Saxony (LGLN).

This project is carried out in cooperation with the Geodetic Institute Hannover (GIH) and is funded by the German Research Foundation (DFG).